Cay S. Horstmann

Department of Computer Science

San Jose State University

cay.horstmann@sjsu.edu

Learning how to program a computer is of increasing importance to students from many majors, but it is an intellectually demanding activity. San Jose State University has limited capacity in offering the “Introduction to Programming” course on campus and is unable to keep up with demand. After a largely successful experiment in offering the course as a MOOC through Udacity in Summer 2013, the SJSU CS department is offering it as a “SPOC” (small private online course) in Spring 2014. This redesigned version of the course has more practice and interaction. Our aim is to have parity in pass rates and student success between the online and regular offerings.

CS46A is the first course in the computer science major at SJSU, but we also serve a substantial number of non-majors. About a third of the students are majors in some other program. Many are undeclared because we do not admit many students into the CS program until after they have passed this course.

The department strives for a consistent experience in multiple sections of CS46A. Design and implementation decisions for the course are collectively made by a course coordinator, the course instructors, and a curriculum committee, and uniformly applied to all sections. (When I write “we” in this document, it refers to this common practice. When I write “I”, it refers to the redesign of the online section only.)

The subject matter of the course is an introduction to computer programming and algorithmic thinking. This green sheet lists the student learning outcomes:

In many disciplines, the first course is largely expository, or it might ask students to apply certain patterns to similar situations. In contrast, programming requires students to construct new solutions without following a predetermined plan. After all, if there were such a plan, a computer could execute it and the construction would be of negligible value. Students are, to say the least, surprised when they are asked to perform activities at level 5 of Bloom’s taxonomy in an introductory course:

Sadly, the answer is “no”. There have been efforts to reorganize the introductory sequence and start with expository material, but that hasn't worked too well. Programming is simply a requirement to progress in the major.

In addition, programming is an incredibly useful skill in many other majors. Just about anyone who has to deal with data sets would benefit from processing them their way instead of with canned procedures. Just as knowledge of calculus became indispensable for scientists and engineers a century ago, programming is no longer something left to a small minority. Well-connected organizations such as code.org and the NSF promote the idea that every student in every school should have a chance to learn how to program.

The fact of life in an introductory computer science course is that one has large numbers of students who are motivated to learn the subject matter, but many are unprepared for the difficulty of the task. Over the last ten years, the CS department has gained quite a bit of experience in dealing with these challenges. Here is what works:

Grading, tutoring, mentoring, and lab instruction are expensive, even after having changed the course from small classes to a single lecture. We are largely able to serve our majors, but we cannot keep up with the demand from other majors.

In Spring 2013, SJSU collaborated with Udacity to produce MOOCs (massive open online courses) for elementary mathematics, statistics, and our introductory programming course. The department had three motivations for participating in this effort.

From an academic perspective, the results of the collaboration were largely positive. (See this conference presentation for a summary.) Pass rates were comparable with the brick-and-mortar course. Udacity provided substantial resources with video production and editing, including a co-instructor who presented many exercises. The technique of presenting short videos that are instantly followed by programming activities with instant feedback seemed a distinct improvement over the traditional lecture.

Note that our experience was quite different from that of the elementary mathematics course, where SJSU had a more disappointing experience with the Udacity course. By and large, our audience was engaged and hard-working, and those who were not were given a chance to drop the course without negative consequences (other than the modest fee for the course).

However, it seems that Udacity was not satisfied with the commercial prospects of the course, and no further resources were devoted to fixing errors or improving the materials after the initial offering. Nevertheless, the videos and exercises continue to be available to anyone who wishes to use them.

In hindsight, there were some issues with having a “massively open” course. The SJSU students derived little, if any, benefit from the large cohort that followed the course for free. (It was estimated that over 15,000 students registered for the free course, in comparison to 900 SJSU students.) In particular, discussion groups were clogged with so many messages that they became difficult to use. Also, Udacity cut out the labs because they are not easily adapted for automatic grading. The department learned the value of an online offering, but also that a “small private” online course can serve our purposes better.

The department is offering a fully online version of CS46A in Spring 2014. The goal is to attract those students who are most likely to succeed in the online medium: self-motivated students with some computer skills and the ability and willingness to seek help online. It has been our estimation that about 1/3 of our CS46A audience is in that category, and indeed we have about 90 students in the online course vs. 150 students in the on-campus course.

We also advertised the course to other CSU campuses but unfortunately received no students.

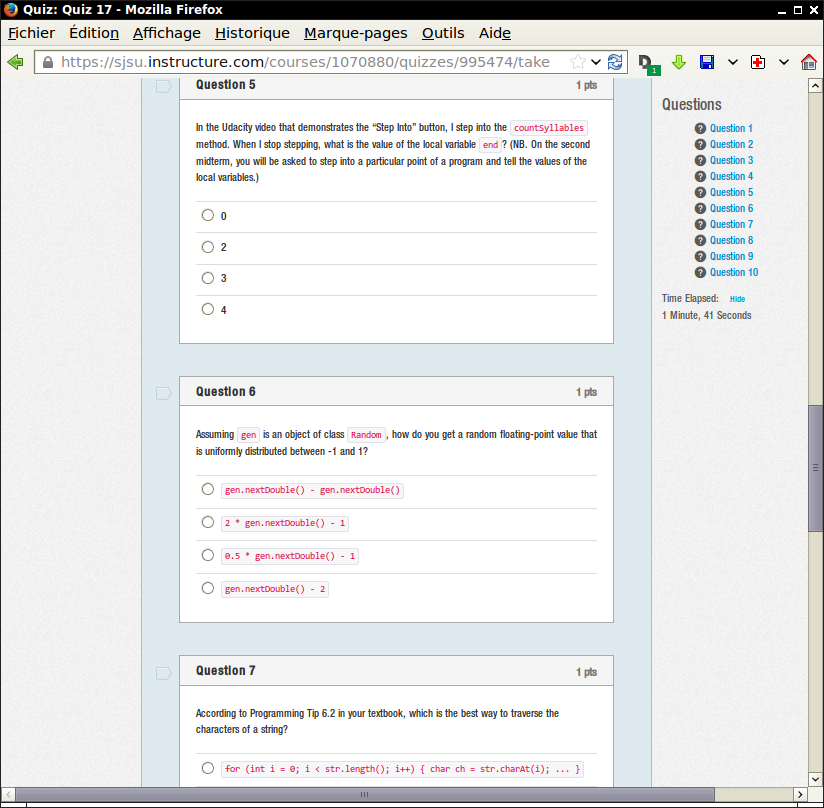

There are no lectures. Instead, students watch the Udacity videos and work through the Udacity questions. We have no access to the student activities in the Udacity platform. Instead, there are weekly quizzes that probe familiarity with the videos.

For reasons of cost and room availability, we are not able to offer scheduled or drop-in labs. We also don't see how we can require pair work, given the work schedules of the students, but we encourage it by giving a small bonus credit. Students must demonstrate their lab work to a lab instructor via screen sharing once per week.

We realize that online exercises must be refreshed every semester. All homework assignments are new and unrelated to the Udacity assignments, for which solutions are widely available.

Compared to the Udacity course, I designed many more interactive exercises. The programming assignments are more numerous and more rigorous, the quiz questions are more probing, and the labs are now required. Students are graded on these activities, and they are also expected to do the Udacity activities. Practice truly makes perfect in this course.

We are concerned about cheating in exams proctored by an online service. (I was able to cheat with ease when posing as a student in a proctored exam of the Udacity/SJSU class.) Exams are done on campus on Saturdays. I would have used the proctoring service for students from other campuses, had they materialized.

The absence of tutoring and mentoring is a concern. I had hoped to send students to a tutoring facility in the CS department, but that has been eliminated due to budget cuts. I experimented with online tutoring through Webex.

Frankly, the course (regular as well as online) has issues of gender balance and diversity. Our mentoring efforts for the regular course, where we actively tried to get women and minority mentors, have so far not been very successful, and we don't yet see how we can do better online.

Note that there is no difference in the syllabus between the regular and the online course. It is our intent that students can choose either mode, depending on their preferences, and that both modes have the same outcomes.

In this section, I go into greater detail about the materials for the online “Introduction to Programming” course.

Students watch the videos from the CS046 course at Udacity. Each segment is about 2-3 minutes long, followed by an activity (usually a short program) and an answer video. Here is a typical example:

This format seems far more effective than long video segments because it frequently engages the students and gives them immediate feedback on whether they are comprehending the material. Because the segments mostly probe using programming examples, not multiple choice, it is possible to gauge student knowledge at a fairly deep level.

The Udacity videos fully cover the course, and I saw no need for additional lecture videos. However, at the beginning of each week, I record an introductory video. Here is a sample:

More importantly, I record videos that describe how to solve the programming assignments. In the videos, I focus on the thought process of arriving at a program design.

Each week, except over spring break, students solve three complete programming assignments. Each assignment is due as a draft on Wednesday, and as a final version on Sunday. That way, students have several days to think about the problems, as evidenced by a steady flow of questions on the discussion board throughout the week.

Every Monday, solutions are made available as source code and with answer videos.

Assignments are newly created, either brand-new or a modification of a previous assignment to make it Google-proof. Assignments are automatically graded with the code-check autograder. Moss is used for plagiarism checking.

This is quite different from what used to go on in this course, when anywhere between six long and twenty short assignments were given, generally without a draft, and often reused from prior semesters. Except for the most motivated students, it was the norm to start the assignments too late to think them through properly, and to turn in code that didn't actually work. Cheating was rampant.

Now students turn in 45 assignments (or 90 submissions), and a large fraction of them work perfectly, which makes it difficult to assign homework grades that aren't lopsided. Cheating has unfortunately not been eliminated.

Here is a typical assignment: http://horstmann.com/sjsu/spring2014/cs46a/week08.html.

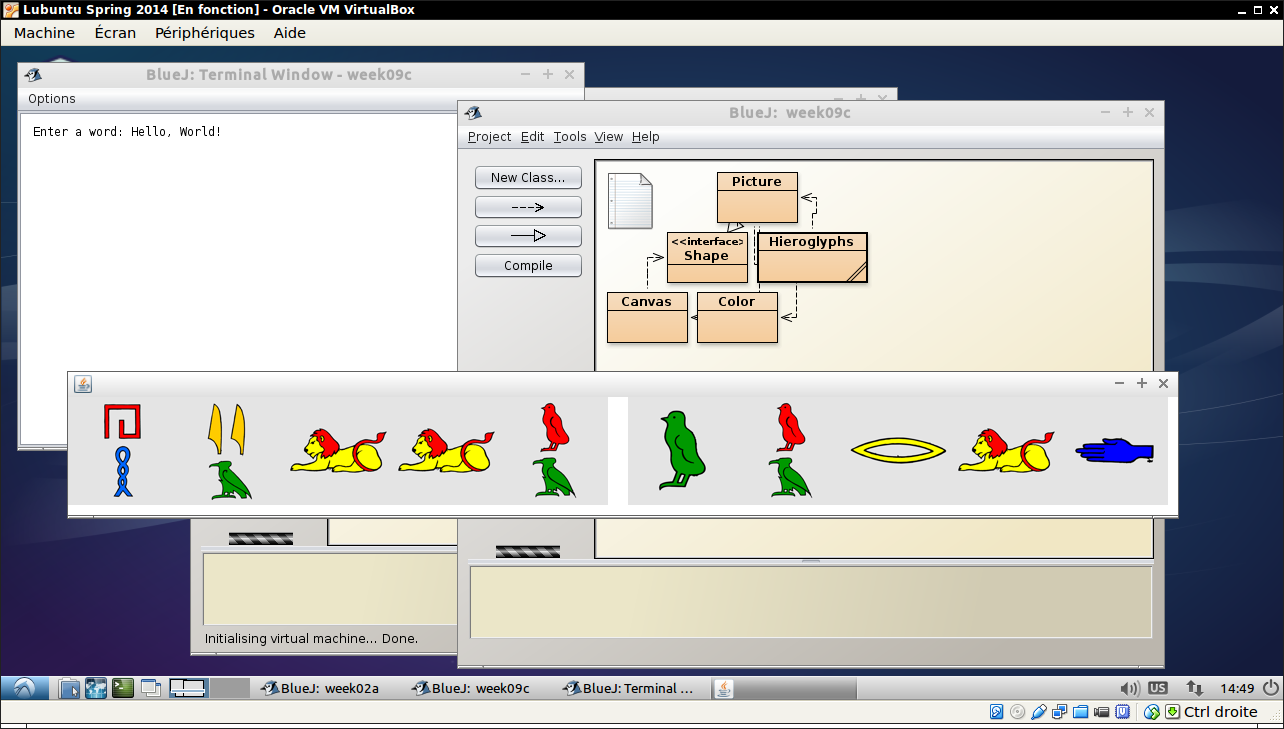

In the past, CS46A students used a wide variety of Java development environments on diverse operating systems. I was concerned about remotely supporting such a wide variety, so I provided students with a virtual machine that gives a consistent baseline for all students.

The virtual machine is based on Lubuntu Linux (a lightweight version of Ubuntu), and it can be installed on Windows, Mac, and Linux machines. It contains all software needed for the course, preconfigured and ready to run.

In the past, we used two development environments in the lab, BlueJ for introductory object-oriented programming and NetBeans for engaging projects that use the 3-D graphics from Alice. In order to simplify the tool set, I replaced those exercises with 2-D graphics, based on the library used in the Udacity lectures. For example, instead of a 3-D robot tracing a spiral or escaping from a maze, I now show a 2-D image of a Roomba:

Now the students can use BlueJ for all homework and labs. Through these changes, I dramatically reduced the pain of software installation. As a result, all students were able to start their programming homework in week 1.

The Udacity course designers insisted that the course was entirely based on videos. There was to be no textbook. However, that was not popular with students. They complained that it was difficult to use the videos for review. (Clearly, the art of notetaking has been lost in this generation.)

I decided to reverse course and to again require the textbook. To ensure that the textbook is actually used, there are quizzes twice a week. The Thursday quiz comes from the publisher-supplied testbank. But on Monday, the quiz questions are newly written and refer directly to sections in the textbook and how they relate to the Udacity videos and the homework assignments. The idea is to teach students how to navigate the textbook better. The questions give rise to a fair amount of traffic on the discussion board, so that seems to be working, at least with the more diligent students.

In order to give students supervised practice, the CS46A course has a mandatory 3-hour lab. Students work in pairs under the supervision of a lab assistant. Here is a typical lab: http://cs46labs.bitbucket.org/cs46a/lab6/index.html.

The lab component was dropped when we offered the course online through Udacity. It would have been too challenging to offer in a MOOC. But we were not happy about that. Students learn valuable higher-level design and problem solving skills in the labs that are difficult to convey through lectures and “drill and practice” assignments.

One aspect of the labs that we find helpful is for students to work in pairs. We find that two students can often solve problems that would have baffled them in isolation. (We tried larger groups, but that proved hard to manage for the lab assistants.) This semester, we decided to require labs for the online class as well. I encourage (but do not require) students to work in pairs. There is a 10% bonus for each paired lab report.

I provided several avenues for pair matching: through the discussion board, by letting the professor be matchmaker, by meeting at a prearranged spot, etc. I leave it to pairs to find some way to meet online or on campus.

Lab instructors set up regular office hours via Webex. Students can ask for help, and to get credit for their lab work, they need to demo it via screen sharing.

We use Piazza as a discussion forum since it is easier to use than the discussion component of our LMS, Canvas. (In fact, we only use Canvas for quizzes and grade reporting. All other material is on a public web site.)

Discussion is reasonably vigorous, but only about 20% of the class members are active participants. The rest read the posts, many of which contain good homework hints, but remain entirely passive.

![piazza](piazza.png)

It is probably too late this semester to change the tone of the forum, but this needs improvement next semester.

One of the challenges in a large course, online or regular, is that the weaker students tend to be inconspicuous until it is too late for them to seek help. Our LMS, Canvas, makes it easy to notify those who aren't participating in quizzes and homeworks. But it has not done a good job telling instructors or students how they stand in class. The “final grade” that Canvas reports is completely out of step with actual student performance.

As a result, it has been difficult for instructors to find out which students should be referred to mentoring and tutoring. Similarly, students didn't get good feedback that would motivate them to seek help.

Fortunately, Canvas has a programming interface that allows us to access and update the data that it stores. I used that interface to implement a program that computes an accurate prediction of the final grade for each student and puts it in the gradebook. The program also provides a list of the most at-risk students to instructors. When run weekly (or twice a week at the beginning of the semester), it is effective in providing timely intervention. (Sadly, due to our dire departmental budget, mentors and tutors were not always available to help those students.)
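As an illustration only, here is a minimal Java sketch of the kind of computation such a grade prediction might involve. It is not the program described above, and it makes no Canvas API calls. The class name `GradePredictor`, the category names, the weights, and the 70% threshold are all hypothetical. The sketch assumes that each grading category has a fixed weight and that categories with no graded work yet (for example, a final exam that has not been given) are left out, with the remaining weights rescaled.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical sketch: predicts a final percentage from the work graded so far.
final class GradePredictor {
    /** Points earned and points possible so far in one grading category. */
    record CategoryScore(double earned, double possible) {}

    /**
     * Weighted average over the categories that already have graded work.
     * Ungraded categories are skipped and the used weights are rescaled,
     * so a future exam does not count as a zero.
     * Returns NaN if nothing has been graded yet.
     */
    static double predict(Map<String, Double> weights,
                          Map<String, CategoryScore> scores) {
        double weightedSum = 0;
        double weightUsed = 0;
        for (Map.Entry<String, Double> e : weights.entrySet()) {
            CategoryScore s = scores.get(e.getKey());
            if (s == null || s.possible() <= 0) continue; // nothing graded yet
            weightedSum += e.getValue() * s.earned() / s.possible();
            weightUsed += e.getValue();
        }
        return weightUsed == 0 ? Double.NaN : 100 * weightedSum / weightUsed;
    }

    /**
     * Names of students whose prediction is below the threshold,
     * weakest first. Students with no graded work (NaN) are not flagged.
     */
    static List<String> atRisk(Map<String, Double> weights,
                               Map<String, Map<String, CategoryScore>> students,
                               double threshold) {
        Map<String, Double> predicted = new HashMap<>();
        List<String> flagged = new ArrayList<>();
        for (Map.Entry<String, Map<String, CategoryScore>> e : students.entrySet()) {
            double p = predict(weights, e.getValue());
            if (p < threshold) { // comparison is false for NaN
                predicted.put(e.getKey(), p);
                flagged.add(e.getKey());
            }
        }
        flagged.sort((a, b) -> Double.compare(predicted.get(a), predicted.get(b)));
        return flagged;
    }

    public static void main(String[] args) {
        // Hypothetical weights; the actual course weights are not given here.
        Map<String, Double> weights = Map.of(
            "homework", 0.3, "quizzes", 0.1, "labs", 0.1, "exams", 0.5);
        Map<String, CategoryScore> alice = Map.of(
            "homework", new CategoryScore(90, 100),
            "quizzes", new CategoryScore(40, 50));
        Map<String, CategoryScore> bob = Map.of(
            "homework", new CategoryScore(30, 100),
            "quizzes", new CategoryScore(20, 50));
        System.out.printf("alice: %.1f%%%n", predict(weights, alice));
        System.out.println("at risk: "
            + atRisk(weights, Map.of("alice", alice, "bob", bob), 70));
    }
}
```

Rescaling the weights is one design choice among several. It keeps early-semester predictions from looking artificially low, but it also means that a prediction based on only a few quizzes can swing widely, which is one reason to rerun the computation frequently at the start of the term.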

Contributed by Dr. Rick Lumadue, Special Consultant, California State University, Office of the Chancellor